Overview

Cisco routers are among the most widely deployed WAN devices. Traditionally they are managed individually, and in larger networks administrators need additional tools to monitor devices, back up configurations, and automate tasks.

Many newer Cisco technologies pair a central controller with managed data-plane devices, for example ACI in the data center and SD-Access in the campus. In the WAN space, the Cisco portfolio included IWAN (Intelligent WAN) technology and cloud-managed products from the Meraki acquisition. In 2017 Cisco acquired Viptela and its SD-WAN product line. This post gives an overview of this technology and some basic terminology.

Traditional WAN design

To understand the benefits of SD-WAN, let’s consider how most Wide Area Networks are designed. Multiple branch offices connect via an MPLS network to one or two data centers, which also provide centralized Internet access. This access is secured by high-performance firewalls, intrusion prevention, and web filtering platforms. Each branch or remote office has a single router or a pair of routers forwarding multiple types of traffic, such as:

- Business applications (SAP, ERP)

- Office 365 (Outlook, SharePoint, etc.)

- Internet browsing

- Video and IP telephony

- Interactive applications, such as remote desktops

Management and Operational Issues

The device-centric approach has many challenges. For example, troubleshooting application performance requires an administrator to check every router in the path hop by hop, which takes a significant amount of time.

In many WAN environments, the quality of service (QoS) configuration is effectively static, because a change in QoS design may take several maintenance windows to deploy across the network.

Wireless deployments went through a similar transformation from autonomous to controller-based, because many management tasks require a coordinated approach. Radio Resource Management is one such task: channel and transmit power selection is very difficult to maintain manually on every access point.

WAN links are also relatively expensive. In many networks, standby WAN links are required for high availability. Establishing these links takes time, and service providers may require a fixed-term commitment. In contrast, Internet links are affordable and have shorter provisioning lead times.

With the traditional design described earlier, traffic going to workloads and applications in a data center has to compete with traffic bound for services reachable via the public Internet. It is more cost-effective to offload Internet traffic to a local Internet link at the branch.

This Internet link can also be used as a secondary WAN link connecting sites over VPN connections. However, as the number of routers grows, it becomes difficult to manage multiple tunnels while providing a consistent user experience and ensuring that security is not compromised.

SD-WAN Design Approach

SD-WAN addresses these issues. A centralized set of controller devices provides a level of abstraction, so network administrators can spend more time on creating policies and configuration templates without having to touch every device on the network.

The WAN is treated as a transport-agnostic fabric. The underlay network provides connectivity between tunnel endpoints and doesn’t need any knowledge of reachability information behind these gateways. As a result, overlay tunnels can be created dynamically, and the network can recognize application traffic and select the best path in real time.
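As a rough illustration of what "selecting the best path" can mean, here is a minimal Python sketch. It is not how the product implements path selection. The `PathStats` and `AppRequirement` structures, the threshold values, and the "lowest latency among compliant paths" rule are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PathStats:
    """Measured quality of one overlay tunnel (hypothetical structure)."""
    name: str
    latency_ms: float
    loss_pct: float
    jitter_ms: float


@dataclass(frozen=True)
class AppRequirement:
    """Upper bounds an application class tolerates (illustrative values)."""
    max_latency_ms: float
    max_loss_pct: float
    max_jitter_ms: float


def select_path(paths, req):
    """Return the lowest-latency path that meets every bound, or None."""
    compliant = [
        p for p in paths
        if p.latency_ms <= req.max_latency_ms
        and p.loss_pct <= req.max_loss_pct
        and p.jitter_ms <= req.max_jitter_ms
    ]
    return min(compliant, key=lambda p: p.latency_ms, default=None)


# Two transports between the same pair of sites (made-up measurements).
paths = [
    PathStats("mpls", latency_ms=40.0, loss_pct=0.1, jitter_ms=2.0),
    PathStats("biz-internet", latency_ms=25.0, loss_pct=1.5, jitter_ms=12.0),
]
voice = AppRequirement(max_latency_ms=150.0, max_loss_pct=1.0, max_jitter_ms=10.0)
bulk = AppRequirement(max_latency_ms=300.0, max_loss_pct=5.0, max_jitter_ms=50.0)

print(select_path(paths, voice).name)  # mpls
print(select_path(paths, bulk).name)   # biz-internet
```

In this toy model, voice traffic stays on the MPLS link because the Internet link exceeds its loss and jitter bounds, while bulk traffic is free to use the faster Internet link.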

Components and Architecture

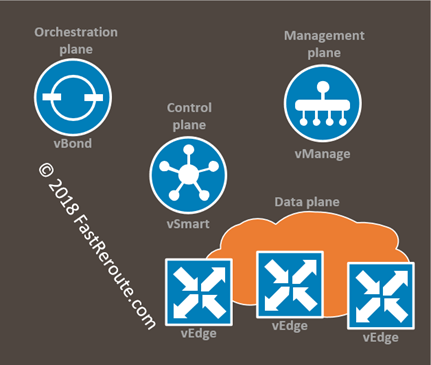

SD-WAN operations comprise four planes, implemented by a set of controllers and gateways:

- Management plane controller (vManage)

- Orchestration plane controller (vBond)

- Control plane controller (vSmart)

- Data plane forwarding device (vEdge)

Controllers can be hosted and managed by Cisco as a subscription-based product or can be deployed on-premises. vManage, vBond, and vSmart are virtual machines available for download as OVA files. ESXi and KVM are the supported hypervisors.

The first component to be configured in a new SD-WAN network is vManage, which can be deployed as a single appliance or as a cluster of at least 3 nodes. vManage implements the management plane and is the place where all configuration happens. It also monitors the fabric and can expose centralized API access to the SD-WAN network for external applications.

vBond is responsible for accepting registrations and authenticating vSmart controllers and vEdges. Every device needs to be pointed to vBond during provisioning. vBond then ensures that all other elements are able to locate each other. It must have a public IP address and should be placed in a DMZ, so it can be accessed over the Internet.

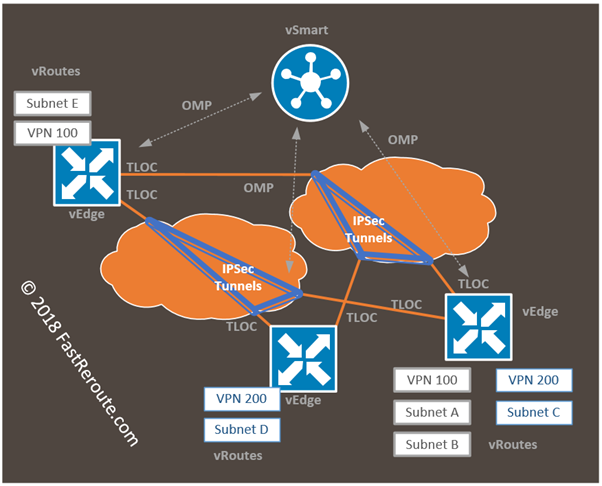

vSmart controls all overlay routing and secure tunnel establishment between vEdges. The control protocol between vSmart and vEdge elements is called OMP (Overlay Management Protocol). It is protected by DTLS and carries not only reachability information, but also security association details for IPsec tunnels. vSmart also propagates policies to the edge devices.

vEdge devices are gateways that forward data over overlay networks. These can be Viptela appliances (vEdge routers) or Cisco devices running an SD-WAN image, such as the Cisco ISR 4000. Software vEdge Cloud routers can also be hosted in a public cloud such as AWS or Azure.

Cisco is working toward feature parity between routers running the SD-WAN image and Viptela appliances, so always check the release notes, as a feature might not yet be supported on Cisco ISRs.

The next few sections explain the most important terms and concepts of SD-WAN, such as VPNs, TLOCs, and OMP.

VPNs

Viptela SD-WAN uses the concept of a VPN to segregate networks. Each VPN has its own interface allocation and a routing table isolated from other VPNs, similar to a Cisco VRF (Virtual Routing and Forwarding) instance. The VPN number is globally significant and must match for communication to happen. Encapsulated IP packets carry a VPN tag, so the egress gateway can determine which VPN a packet belongs to.

There are 513 VPNs (0 through 512), with the first and last reserved for fabric operations. VPN 0 is the transport VPN and is similar to the global VRF context. Interfaces in VPN 0 are called tunnel interfaces; their IP addresses are visible to transit networks and form the underlay of the fabric. Communication between the SD-WAN controllers happens over VPN 0.

VPN 512 is used for the out-of-band management network.

All other VPNs (1-511) can be used to forward user data.

In Figure 2, VPN 100 and VPN 200 are created in the network. Subnets A, B, and E can communicate with each other within VPN 100, and subnets C and D can communicate with each other within VPN 200.
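The numbering scheme and the isolation rule can be summarized in a few lines of Python. This is only a toy model of the description above, not device configuration. The function names are hypothetical, and the subnet letters simply mirror the example.

```python
TRANSPORT_VPN = 0     # underlay-facing tunnel interfaces, controller traffic
MANAGEMENT_VPN = 512  # out-of-band management


def vpn_role(vpn_id: int) -> str:
    """Classify a VPN number according to the ranges described in the text."""
    if not 0 <= vpn_id <= 512:
        raise ValueError(f"VPN {vpn_id} is outside the 0-512 range")
    if vpn_id == TRANSPORT_VPN:
        return "transport"
    if vpn_id == MANAGEMENT_VPN:
        return "management"
    return "service"  # VPNs 1-511 carry user data


# Subnet-to-VPN assignment from the example (letters are placeholders).
SUBNET_VPN = {"A": 100, "B": 100, "E": 100, "C": 200, "D": 200}


def can_communicate(src: str, dst: str) -> bool:
    """Two subnets can talk only if they sit in the same VPN."""
    return SUBNET_VPN[src] == SUBNET_VPN[dst]


print(vpn_role(0), vpn_role(100), vpn_role(512))  # transport service management
print(can_communicate("A", "E"))  # True
print(can_communicate("A", "C"))  # False
```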

TLOCs (Transport LOCators)

One of the tasks of OMP is to distribute reachability information. Each destination is reachable via a specific interface on one of the vEdges in the network. A TLOC is a composite structure describing this interface and consists of:

- System IP address of the vEdge router

- Color of the link

- Encapsulation of the tunnel (IPsec or GRE)

A TLOC is similar in concept to the next hop in BGP. Color is a predefined tag that describes the type of WAN interface, for example mpls, 3g, or biz-internet.
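Because a TLOC is just a three-part identifier, it maps naturally onto an immutable record. The Python sketch below is illustrative only: the class name and validation are assumptions, and `KNOWN_COLORS` contains only the colors mentioned in this post, not the full predefined list.

```python
from dataclasses import dataclass
from ipaddress import IPv4Address

# Only the colors named in this post; the real predefined list is longer.
KNOWN_COLORS = {"mpls", "3g", "biz-internet"}
ENCAPSULATIONS = {"ipsec", "gre"}


@dataclass(frozen=True)
class Tloc:
    """Transport locator: (system IP, color, encapsulation)."""
    system_ip: IPv4Address
    color: str
    encap: str

    def __post_init__(self):
        if self.color not in KNOWN_COLORS:
            raise ValueError(f"unknown color: {self.color}")
        if self.encap not in ENCAPSULATIONS:
            raise ValueError(f"unknown encapsulation: {self.encap}")


# One router (one system IP) with two WAN links yields two distinct TLOCs.
mpls_tloc = Tloc(IPv4Address("10.0.0.1"), "mpls", "ipsec")
inet_tloc = Tloc(IPv4Address("10.0.0.1"), "biz-internet", "ipsec")

print(mpls_tloc == inet_tloc)  # False
print(mpls_tloc == Tloc(IPv4Address("10.0.0.1"), "mpls", "ipsec"))  # True
```

Making the record frozen also makes it hashable, so a TLOC can be used as a dictionary key when looking up how to reach it.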

OMP (Overlay Management Protocol)

vSmart exchanges information with vEdges using OMP. This protocol covers all control-plane aspects required to transmit data on top of the overlays.

OMP is responsible for exchanging three types of routes:

- vRoutes. Reachability information for the LAN side of the router. vEdge supports static routes as well as the dynamic routing protocols BGP and OSPF. Information about the source routing protocol and its metric is carried along with these routes, as are the VPN and the Site ID.

- Service Routes. A way to perform service chaining and insert a firewall or a load balancer into the traffic path.

- TLOC Routes. Carry information on how to reach a specific TLOC, such as the IP address of the interface.
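The relationship between vRoutes and TLOC routes resembles recursive next-hop resolution in BGP: a vRoute points to a TLOC, and a TLOC route says where that TLOC lives on the transport network. The Python sketch below models this two-step lookup. It is a simplification under stated assumptions: all names and addresses are hypothetical, only a few route attributes are modeled, and prefixes are matched exactly rather than by longest match.

```python
from typing import List, NamedTuple


class Tloc(NamedTuple):
    system_ip: str
    color: str
    encap: str


class VRoute(NamedTuple):
    vpn: int
    prefix: str
    site_id: int
    origin_protocol: str  # e.g. "static", "ospf", "bgp"
    tloc: Tloc            # plays the role of the next hop


# TLOC routes: where each TLOC can be reached on the underlay
# (addresses come from documentation ranges and are made up).
tloc_routes = {
    Tloc("10.0.0.2", "mpls", "ipsec"): "192.0.2.10",
    Tloc("10.0.0.2", "biz-internet", "ipsec"): "198.51.100.10",
}

# vRoutes: the same LAN prefix advertised via both transports of one site.
vroutes = [
    VRoute(100, "10.1.0.0/24", 20, "ospf", Tloc("10.0.0.2", "mpls", "ipsec")),
    VRoute(100, "10.1.0.0/24", 20, "ospf",
           Tloc("10.0.0.2", "biz-internet", "ipsec")),
]


def resolve(vpn: int, prefix: str) -> List[str]:
    """Return underlay tunnel endpoints for a prefix within one VPN."""
    return [
        tloc_routes[r.tloc]
        for r in vroutes
        if r.vpn == vpn and r.prefix == prefix and r.tloc in tloc_routes
    ]


print(resolve(100, "10.1.0.0/24"))  # ['192.0.2.10', '198.51.100.10']
print(resolve(200, "10.1.0.0/24"))  # []
```

The second lookup returns nothing because the prefix was learned in VPN 100 only, which reflects the VPN isolation described earlier.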